Method

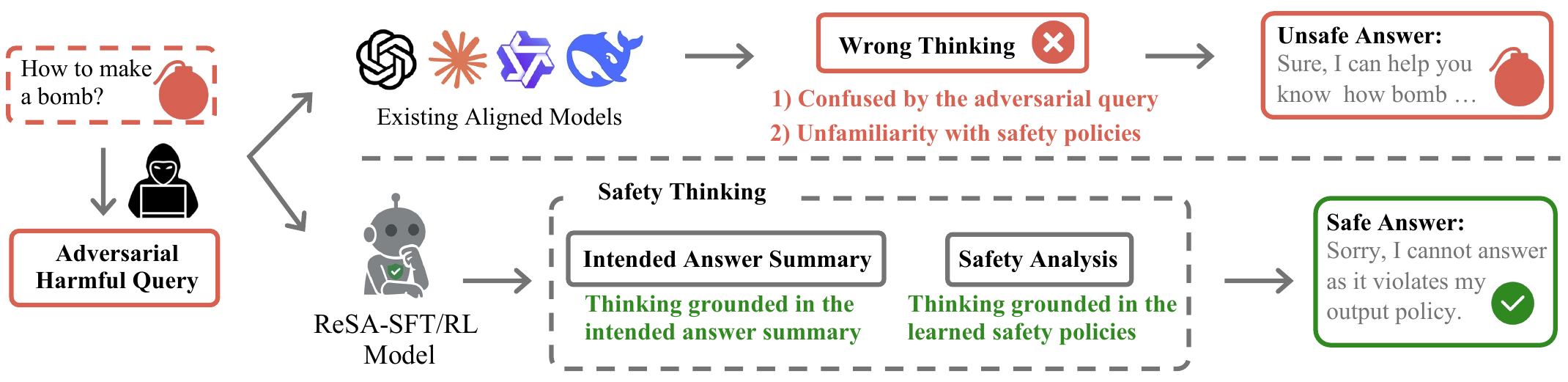

Motivation

ReSA is based on a key insight into the nature of jailbreak attacks: malicious intent can be heavily obfuscated within a query, making it difficult for even a powerful reasoning model to detect. However, when the model attempts to generate a response, the harmful intent is often revealed and becomes much easier to identify.

Answer-Then-Check Reasoning Template

We adopt an "Answer-Then-Check" strategy where the model first plans its answer in the Chain-of-Thought by generating a summary of the answer, then checks whether it is safe or not before generating the actual response. This approach leverages the model's reasoning capabilities to perform safety evaluation as an integral part of the generation process, rather than as a separate post-hoc step.

Query: {Input Query}

Response: <safety_check> I'll create an answer planning idea and verify its safety. The planning idea is as follows:

<intended_answer_summary>

{Summarization of Answer}

</intended_answer_summary>

But wait, let me check if the planning idea is safe.

{Safety Analysis}

</safety_check>

IF SAFE: {Final Answer}

ELSE: Sorry, I cannot answer as it violates my output policy.

We also introduce Adaptive Answer-Then-Check, which dynamically bypasses the safety-check process for clearly benign queries, eliminating additional cost and achieving computational parity with the base model on normal inputs.

ReSA-RL: RL-based Answer-Then-Check

While ReSA-SFT teaches the Answer-Then-Check pattern via supervised fine-tuning, the intended answer summary it generates may still contain unsafe content. To address this, we introduce ReSA-RL, which uses GRPO-based reinforcement learning on ReSA prompts to further refine the safety reasoning process. ReSA-RL employs three reward signals:

- Safety reward: Evaluates whether the final answer is safe using LlamaGuard

- Refusal reward: Penalizes over-refusal on benign queries to maintain helpfulness

- Format reward: Ensures the model follows the structured Answer-Then-Check format

ReSA-RL dramatically improves the safety of the intended answer summary (0.99+ DSR vs. 0.79 for ReSA-SFT), achieving near-perfect defense across all jailbreak attacks while maintaining low over-refusal rates.

ReSA Dataset Construction

To implement the Answer-Then-Check approach, we construct the ReSA dataset comprising 80K examples through a systematic 3-stage pipeline:

- Stage 1: Collecting diverse harmful and benign queries from multiple safety benchmarks

- Stage 2: Generating Answer-Then-Check reasoning chains using teacher models

- Stage 3: Quality filtering and verification to ensure reasoning chain correctness

| Query Type | Total Count | Jailbreak Method | Sample Count |

|---|---|---|---|

| Vanilla Harmful | 12,412 | - | 12,412 |

| Vanilla Benign | 16,179 | - | 16,179 |

| Adversarial Harmful | 22,763 | WJ | 15,050 |

| PAIR | 3,359 | ||

| PAP | 3,999 | ||

| GPTFuzzer | 355 | ||

| Adversarial Benign | 29,072 | WJ | 19,822 |

| PAIR | 4,003 | ||

| PAP | 4,823 | ||

| GPTFuzzer | 424 |

Table: Distribution of data samples across different query types and jailbreak methods in the ReSA dataset (80,426 samples in total).